llm-d: Serving AI Inference at Scale

I am a Kubernetes engineer passionate about leveraging the cloud-native ecosystem to run secure, efficient and effective workloads.

Introduction

AI inference is the "doing" part of artificial intelligence. It's the moment a trained model stops learning and starts working, turning its knowledge into real-world results.

Most of us use cutting-edge frontier models such as Gemini and Claude every day. In our personal lives we rarely interact with open-source, purpose-built models such as Qwen and Llama, which often have fewer parameters and are often fine-tuned for specific tasks. For enterprises, however, there is a growing demand to run these kinds of models internally, whether to serve models fine-tuned on proprietary data or for agentic purposes such as tool calling at scale and intent understanding. This creates a strong use case for cloud-native frameworks like KServe and llm-d, which put forward best practices for serving AI inference.

LLM-D

llm-d: a Kubernetes-native high-performance distributed LLM inference framework

llm-d is an open-source inference framework that offers battle-tested configurations to start a model deployment journey. Compared with running vLLM pods on accelerated hardware with vanilla Kubernetes alone, llm-d delivers better performance through features such as KV-cache offloading, separation of the prefill and decode phases (prefill/decode disaggregation) and intelligent inference routing. These features help us serve LLMs at scale. For example, once the prefill and decode phases are separated, prefill pods (compute-bound) can run on compute-optimized hardware and decode pods (memory-bound) on hardware with high memory bandwidth. By tuning the ratio of prefill to decode pods (the P/D ratio), you can also achieve a lower TTFT (time to first token) and TPOT (time per output token). The llm-d framework has many well-lit paths for solving specific problems, depending on the model and the QPS (queries per second) profile of the workload.
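To make the two latency metrics concrete, here is a minimal sketch of how TTFT and TPOT could be computed on the client side from the arrival times of streamed tokens. The `RequestTrace` type and the sample timestamps are hypothetical and only for illustration; they are not part of llm-d or vLLM. TTFT is driven mostly by the prefill phase and TPOT by the decode phase, which is why tuning the two pools separately affects them differently.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RequestTrace:
    """Client-side timestamps (in seconds) for one streamed request (hypothetical type)."""
    sent_at: float            # when the request was sent
    token_times: List[float]  # arrival time of each output token, in order


def ttft(trace: RequestTrace) -> float:
    """Time to first token: delay between sending the request and the first token."""
    if not trace.token_times:
        raise ValueError("TTFT needs at least one output token")
    return trace.token_times[0] - trace.sent_at


def tpot(trace: RequestTrace) -> float:
    """Time per output token: mean gap between tokens after the first one."""
    n = len(trace.token_times)
    if n < 2:
        raise ValueError("TPOT needs at least two output tokens")
    return (trace.token_times[-1] - trace.token_times[0]) / (n - 1)


if __name__ == "__main__":
    # Made-up timestamps: first token after 0.5 s, then one token every 0.25 s.
    trace = RequestTrace(sent_at=0.0, token_times=[0.5, 0.75, 1.0, 1.25, 1.5])
    print(f"TTFT = {ttft(trace):.2f}s, TPOT = {tpot(trace):.2f}s")
```

In practice these values are usually read from the serving metrics rather than computed by hand, but the definitions are the same.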

Architecture

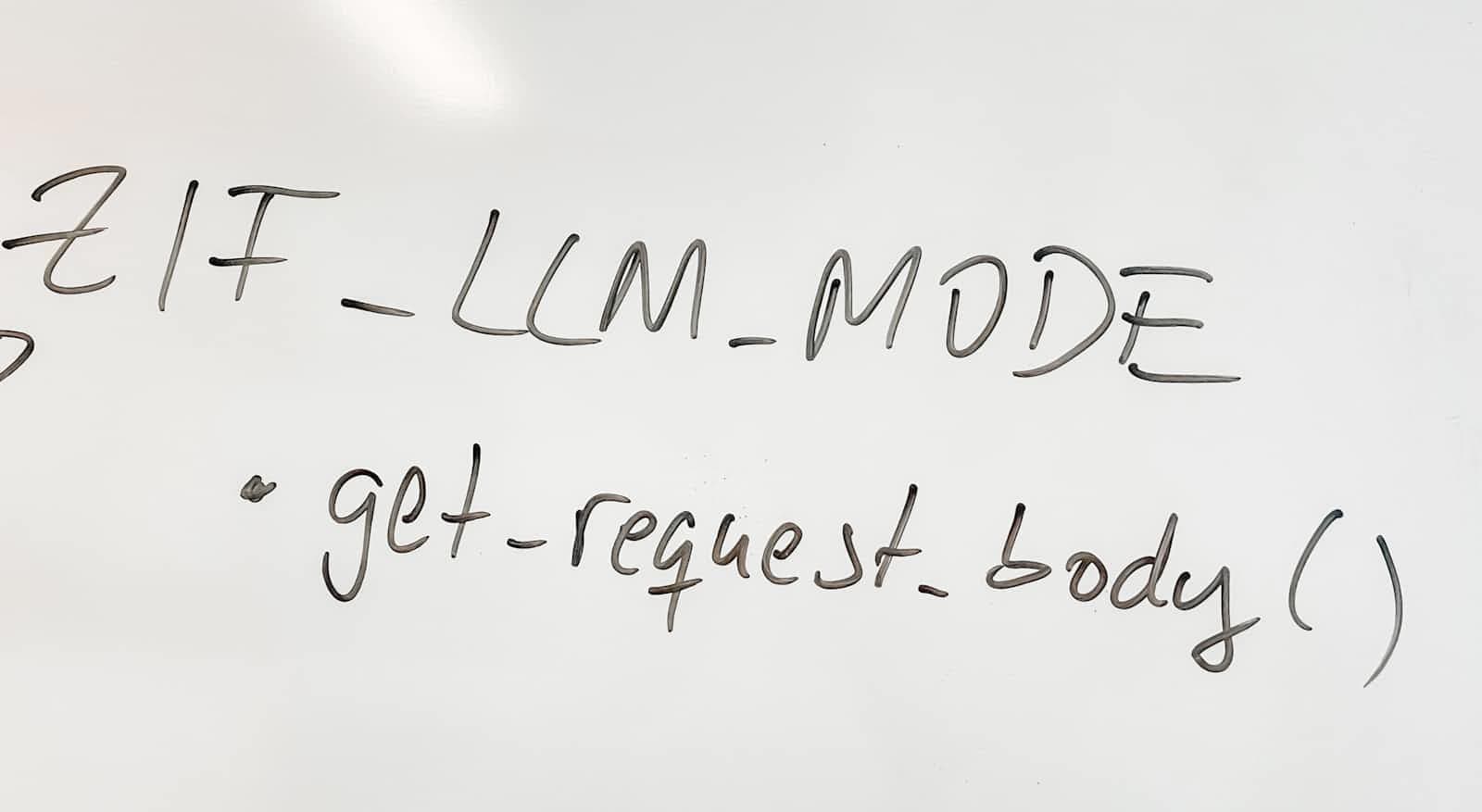

The llm-d architecture has some novel components, such as the Gateway API Inference Extension (GIE) and the Endpoint Picker (EPP). The EPP uses filtering and scoring techniques so that the Gateway API can route each request dynamically to the best available pod.
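The filter-then-score idea can be sketched in a few lines. This is not the EPP implementation: the `Pod` fields, the threshold and the weights below are all assumptions chosen for illustration. Filters first drop pods that should not receive the request at all, then weighted scorers rank the remaining candidates and the highest total wins.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Pod:
    """Per-pod signals a scheduler might look at (hypothetical fields)."""
    name: str
    queue_depth: int       # requests waiting on this pod
    kv_cache_usage: float  # fraction of KV cache in use, 0.0 to 1.0
    prefix_hit: float      # fraction of the prompt already cached, 0.0 to 1.0


Filter = Callable[[Pod], bool]
Scorer = Callable[[Pod], float]


def pick_endpoint(
    pods: List[Pod],
    filters: List[Filter],
    scorers: List[Tuple[Scorer, float]],
) -> Pod:
    """Drop pods that fail any filter, then return the pod with the best weighted score."""
    candidates = [p for p in pods if all(f(p) for f in filters)]
    if not candidates:
        raise RuntimeError("no pod passed the filters")

    def total(pod: Pod) -> float:
        return sum(weight * scorer(pod) for scorer, weight in scorers)

    return max(candidates, key=total)


# Illustrative policy: skip nearly full pods, then prefer cache hits and light load.
FILTERS: List[Filter] = [lambda p: p.kv_cache_usage < 0.9]
SCORERS: List[Tuple[Scorer, float]] = [
    (lambda p: p.prefix_hit, 2.0),               # reuse cached prefixes
    (lambda p: 1.0 - p.kv_cache_usage, 1.0),     # prefer free KV cache
    (lambda p: 1.0 / (1 + p.queue_depth), 1.0),  # prefer short queues
]

if __name__ == "__main__":
    pods = [
        Pod("pod-a", queue_depth=4, kv_cache_usage=0.95, prefix_hit=0.9),
        Pod("pod-b", queue_depth=1, kv_cache_usage=0.50, prefix_hit=0.8),
        Pod("pod-c", queue_depth=0, kv_cache_usage=0.20, prefix_hit=0.0),
    ]
    print(pick_endpoint(pods, FILTERS, SCORERS).name)
```

Here `pod-a` has the best prefix hit but is filtered out because its KV cache is almost full, so the choice falls to the pod with the best combined score among the rest.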

Here is a detailed infographic on inference scheduling

Testing Inference Scheduling

I deployed a GKE cluster with an NVIDIA L4 GPU instance for the model server and some general-purpose compute for all other pods. First, you need to enable the GKE Gateway class so that llm-d can create the Gateway during helm install. Here is a how-to for that. Once that is done, install a monitoring stack to observe the metrics of the vLLM pods; the llm-d GitHub repository has a bash script that deploys kube-prometheus-stack. Then I installed the Helm charts to deploy the model server (ms) pods on the L4 GPU instance.

    llm-d-inference-scheduler   gaie-inference-scheduling-epp-5dc59b4767-dv8tx                    1/1   Running   0   51m
    llm-d-inference-scheduler   ms-inference-scheduling-llm-d-modelservice-decode-5b7bb867kxmff   0/2   Pending   0   27m
    llm-d-inference-scheduler   ms-inference-scheduling-llm-d-modelservice-decode-5b7bb867zxgnm   2/2   Running   0   32m
    llm-d-inference-scheduler   ms-inference-scheduling-llm-d-modelservice-decode-77f5d7b89bc7n   0/2   Pending   0   31m

Then I deployed an HTTPRoute to connect my InferencePool to the Gateway.

    apiVersion: gateway.networking.k8s.io/v1
    kind: HTTPRoute
    metadata:
      name: llm-d-inference-scheduling
    spec:
      parentRefs:
      - group: gateway.networking.k8s.io
        kind: Gateway
        name: infra-inference-scheduling-inference-gateway
      rules:
      - backendRefs:
        - group: inference.networking.k8s.io
          kind: InferencePool
          name: gaie-inference-scheduling
          weight: 1
        matches:
        - path:
            type: PathPrefix
            value: /

With the route in place, I could run some load tests through the Gateway's external IP against the single vLLM pod that I managed to deploy.
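A load test of this kind can be sketched with the Python standard library alone. The sketch below is not the tool used in this post; it assumes the Gateway forwards to vLLM's OpenAI-compatible `/v1/completions` endpoint, and `GATEWAY_IP` and `MODEL_NAME` are placeholders you would replace with your own values. The runnable part uses a stand-in call so it works offline.

```python
import json
import math
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def send_completion(base_url: str, model: str, prompt: str) -> None:
    """POST one request to an OpenAI-compatible /v1/completions endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "max_tokens": 64}).encode()
    req = urllib.request.Request(
        f"{base_url}/v1/completions",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=60) as resp:
        resp.read()  # wait for the full response before stopping the clock


def timed(call: Callable[[], None]) -> float:
    """Run one call and return its wall-clock latency in seconds."""
    start = time.perf_counter()
    call()
    return time.perf_counter() - start


def run_load(call: Callable[[], None], total_requests: int, concurrency: int) -> List[float]:
    """Issue total_requests calls with at most `concurrency` in flight; return latencies."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(lambda _: timed(call), range(total_requests)))


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


if __name__ == "__main__":
    # Stand-in call so the sketch runs offline. For a real test, swap in:
    #   call = lambda: send_completion("http://GATEWAY_IP", "MODEL_NAME", "Hello")
    call = lambda: time.sleep(0.01)
    latencies = run_load(call, total_requests=20, concurrency=4)
    print(f"p50 = {percentile(latencies, 50):.3f}s, p95 = {percentile(latencies, 95):.3f}s")
```

Because this measures whole-response latency, it does not separate TTFT from TPOT; for that you would need to stream the response and timestamp each chunk.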

Conclusion

Technologies like llm-d, KServe and GIE are still evolving rapidly. LLM inference poses unique challenges that can be tricky to solve. In a future where applications consume tokens on a massive scale, paying a per-token price is hard to justify when AI is just one additional feature of your application (imagine booking an Uber by voice). In those circumstances, frameworks like llm-d are a compelling alternative.